Introduction: The Real Bottleneck in AI Adoption

Every week, another headline announces a breakthrough in artificial intelligence. New models, faster chips, smarter automation. The technology is advancing at a pace that was unthinkable just five years ago.

Yet despite all this innovation, most organizations are struggling to make AI work for them in a meaningful, lasting, and trustworthy way. Projects stall. Pilot programs never scale. And in some cases, AI systems cause real harm — to employees, customers, and communities.

The reason is not the technology.

AI transformation is a problem of governance. The organizations and governments that succeed with AI are not necessarily the ones with the biggest budgets or the most advanced tools. They are the ones that have answered the harder questions: Who is responsible when AI gets it wrong? How do we ensure our systems are fair? What rules guide how AI is built and deployed?

This article breaks down what that means, why it matters, and what you can do about it.

What Does AI Transformation Actually Mean?

AI transformation refers to the process of embedding artificial intelligence into the core operations, decision-making, and culture of an organization. It goes far beyond adding a chatbot to your website or automating a few spreadsheets.

True AI transformation means:

- Redesigning workflows around AI-augmented decisions

- Changing how data is collected, stored, and used

- Retraining employees to work alongside AI systems

- Shifting leadership mindset toward data-driven strategy

When done well, AI transformation can cut costs, improve customer experience, identify new revenue streams, and make organizations significantly more agile. When done poorly, it creates legal exposure, ethical disasters, and a profound loss of public trust.

And the difference almost always comes down to governance.

Why Governance — Not Technology — Is the Biggest Challenge

Here is a simple truth that most technology vendors will not tell you: the hard part of AI is not building the model. It is deciding how the model should behave, who gets to decide that, and what happens when it misbehaves.

Governance is the system of rules, roles, processes, and accountability mechanisms that determine how AI is developed, deployed, monitored, and corrected.

Without governance, even the most technically impressive AI system becomes a liability.

Consider what happens when governance is absent:

- A hiring algorithm screens out qualified candidates based on gender or zip code — and nobody catches it for two years because there was no review process.

- A loan approval model denies credit to people in minority neighborhoods — not because anyone intended discrimination, but because the training data reflected historical bias, and no one was tasked with auditing it.

- A government system flags citizens as fraud risks based on opaque criteria — and people have no way to appeal or even find out why they were flagged.

All three of these examples have happened in the real world. All three are governance failures, not technology failures.
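
One way to make failures like the hiring and lending examples above detectable is a routine comparison of outcomes across groups. The Python sketch below is illustrative only: the function names, the sample data, and the 0.8 threshold (borrowed from the four-fifths heuristic used in US employment guidance) are assumptions, not a legal test or a complete fairness audit.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return the share of approved cases per group.

    `decisions` is an iterable of (group, approved) pairs,
    where `approved` is True or False.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {g: approved[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates):
    """Lowest group selection rate divided by the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved, so there is no gap to measure
    return min(rates.values()) / highest


# Hypothetical data: 100 applicants per group.
sample = (
    [("group_a", True)] * 60 + [("group_a", False)] * 40
    + [("group_b", True)] * 30 + [("group_b", False)] * 70
)
rates = selection_rates(sample)
ratio = disparate_impact_ratio(rates)
needs_review = ratio < 0.8  # assumed threshold; a trigger for human review, not a verdict
```

A low ratio does not prove discrimination. It is a signal that someone with authority should look at the system, which is exactly the review step that was missing in the examples above.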

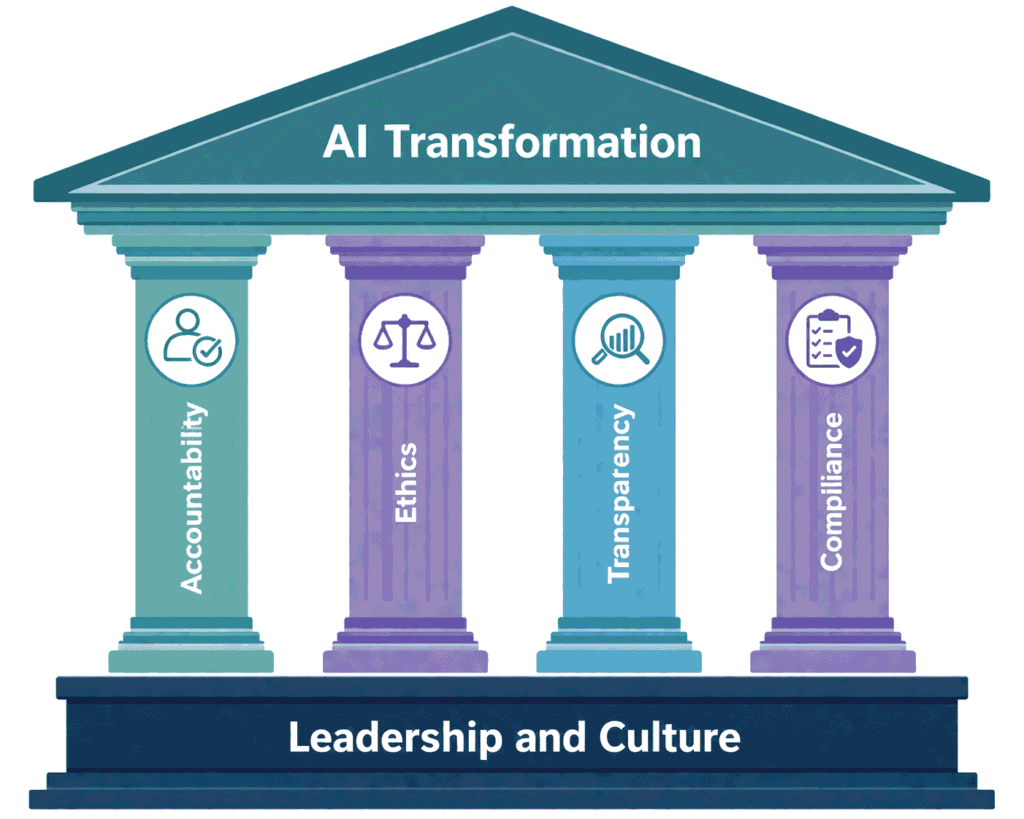

The Four Core Pillars of AI Governance

Building a governance framework for AI transformation does not require a law degree or a philosophy doctorate. It requires clarity on four foundational pillars.

1. Accountability: Who Owns the AI Decision?

Accountability means there is always a human or team that can be held responsible for what an AI system does.

This sounds obvious. In practice, it is one of the most commonly avoided conversations in organizations. AI creates a diffusion of responsibility. The data team says it is a product problem. The product team says it is a legal problem. Legal says it is a technical problem. Meanwhile, no one is in charge.

Effective AI governance assigns clear ownership. Every AI system needs:

- A named decision-maker responsible for the system’s outcomes

- A defined escalation path when the system produces unexpected results

- Regular review cycles with documented findings
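
The three requirements above can be tracked in something as simple as a registry record per system. The following Python sketch shows one possible shape. The class name, field names, and 90-day review interval are hypothetical choices for illustration, not an established standard.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class AISystemRecord:
    name: str
    owner: Optional[str]               # a named person, not a team
    escalation_contact: Optional[str]  # who to alert on unexpected results
    last_review: Optional[date]        # date of the last documented review
    review_interval_days: int = 90     # assumed cadence


def governance_gaps(record: AISystemRecord, today: date) -> List[str]:
    """List the accountability requirements this system does not meet."""
    gaps = []
    if not record.owner:
        gaps.append("no named owner")
    if not record.escalation_contact:
        gaps.append("no escalation path")
    if record.last_review is None:
        gaps.append("never reviewed")
    elif today - record.last_review > timedelta(days=record.review_interval_days):
        gaps.append("review overdue")
    return gaps


# Hypothetical system with no owner and a stale review.
screener = AISystemRecord(
    name="resume-screener",
    owner=None,
    escalation_contact="hr-lead@example.com",
    last_review=date(2024, 1, 15),
)
gaps = governance_gaps(screener, today=date(2024, 6, 1))
```

Running a check like this across every production system gives leadership a concrete list of where responsibility is still undefined.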

Amazon learned this the hard way. According to a 2018 Reuters report, the company built an experimental AI recruiting tool that downgraded resumes from women, and it later scrapped the project. The system had been trained on historical hiring data that reflected a male-dominated workforce. Without a clear accountability structure, a flaw like this can persist long enough to cause harm before anyone is in a position to catch and correct it.

2. Ethics: Is the AI Fair and Aligned With Human Values?

Ethics in AI governance means asking whether the system treats people fairly, respects human dignity, and does not perpetuate or amplify harmful biases.

This is not a soft, optional add-on. It is central to whether your AI transformation succeeds or fails.

The ethical questions include:

- Does the AI treat people differently based on race, gender, age, or other protected characteristics?

- Does it make decisions that people cannot understand or challenge?

- Does it optimize for a metric that looks good on paper but causes real-world harm?

A well-known case is the COMPAS algorithm, used in the United States to predict the likelihood of criminal reoffending. A 2016 analysis by ProPublica found that Black defendants who did not go on to reoffend were nearly twice as likely as white defendants to be wrongly labeled high-risk. Courts were using this tool in sentencing decisions. The ethical failure had life-altering consequences.

AI ethics boards, bias audits, and diverse development teams are not luxuries. They are necessities.
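
A bias audit often starts with a metric like the one at issue in the COMPAS case: how often each group is wrongly flagged. The Python sketch below computes a false positive rate per group. The function name and the numbers are made up for illustration and are not ProPublica's data or method.

```python
from collections import defaultdict


def false_positive_rates(records):
    """Share of people who did NOT reoffend but were flagged high-risk, per group.

    `records` is an iterable of (group, flagged_high_risk, reoffended) tuples.
    """
    negatives = defaultdict(int)  # people who did not reoffend
    false_pos = defaultdict(int)  # of those, how many were flagged anyway
    for group, flagged, reoffended in records:
        if not reoffended:
            negatives[group] += 1
            if flagged:
                false_pos[group] += 1
    return {g: false_pos[g] / negatives[g] for g in negatives}


# Hypothetical audit sample: 100 non-reoffenders per group,
# plus some reoffenders, who do not count toward this metric.
audit_sample = (
    [("group_a", True, False)] * 40 + [("group_a", False, False)] * 60
    + [("group_b", True, False)] * 20 + [("group_b", False, False)] * 80
    + [("group_a", True, True)] * 10 + [("group_b", True, True)] * 10
)
fpr = false_positive_rates(audit_sample)
gap = fpr["group_a"] / fpr["group_b"]  # how many times more often group_a is wrongly flagged
```

Which fairness metric to audit is itself a governance decision, since different metrics can conflict with one another. That choice belongs with an accountable owner, not with whoever happens to write the evaluation script.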

3. Transparency: Can You Explain What the AI Is Doing and Why?

Transparency means that stakeholders — employees, customers, regulators, and the public — can get a meaningful explanation of how an AI system reaches its conclusions.

This has become increasingly important as AI systems grow more complex. The issue of “black box” AI — systems that produce outputs nobody can fully explain — is a major governance challenge.

The European Union’s AI Act, which entered into force in August 2024 with obligations phasing in over the following years, explicitly requires transparency and explainability for AI systems used in high-risk contexts like healthcare, employment, and law enforcement. Companies operating in Europe that cannot explain their AI decisions face serious regulatory and legal consequences.

Transparency also builds internal trust. Employees who do not understand how an AI system evaluates their work or affects their role become disengaged and resentful. Governance frameworks that require explainability internally, not just externally, tend to produce much better adoption outcomes.

4. Compliance: Does the AI Follow Applicable Laws and Policies?

Compliance means the AI system operates within the boundaries set by laws, regulations, and internal policies.

This is rapidly expanding terrain. In recent years, major AI regulations have been introduced or passed in the European Union, the United Kingdom, the United States (at state and federal levels), China, Canada, and many other jurisdictions.

Organizations undergoing AI transformation must understand:

- Which regulations apply to their industry and geography

- How data privacy laws (GDPR, CCPA, etc.) interact with their AI systems

- What documentation and audit trails regulators may require

- How quickly the regulatory landscape is changing

The compliance challenge is not just legal. Organizations also have internal policies — around data use, vendor relationships, and customer commitments — that AI systems must honor. Governance frameworks that treat compliance as a living process, not a one-time checkbox, are far better positioned to handle change.
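
Audit trails are one place where compliance becomes concrete. The Python sketch below shows one possible approach, a hash-chained log in which each entry commits to the one before it, so later edits become detectable. The function names and entry fields are hypothetical, and nothing here reflects what any particular regulator requires.

```python
import hashlib
import json


def append_entry(log, entry):
    """Append a decision record whose hash covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else ""
    payload = json.dumps(entry, sort_keys=True)
    digest = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
    log.append({"entry": entry, "prev_hash": prev_hash, "hash": digest})


def verify_log(log):
    """Return True if no entry has been altered, removed, or reordered."""
    prev_hash = ""
    for item in log:
        payload = json.dumps(item["entry"], sort_keys=True)
        expected = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
        if item["prev_hash"] != prev_hash or item["hash"] != expected:
            return False
        prev_hash = item["hash"]
    return True


# Hypothetical decision records.
log = []
append_entry(log, {"case_id": "A-1", "model": "loan-v2", "decision": "denied"})
append_entry(log, {"case_id": "A-2", "model": "loan-v2", "decision": "approved"})
is_intact = verify_log(log)
```

A log like this does not make a system compliant on its own. It only ensures that the record of what the system decided can be trusted when someone does come to review it.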

Real-World Examples of AI Governance Success and Failure

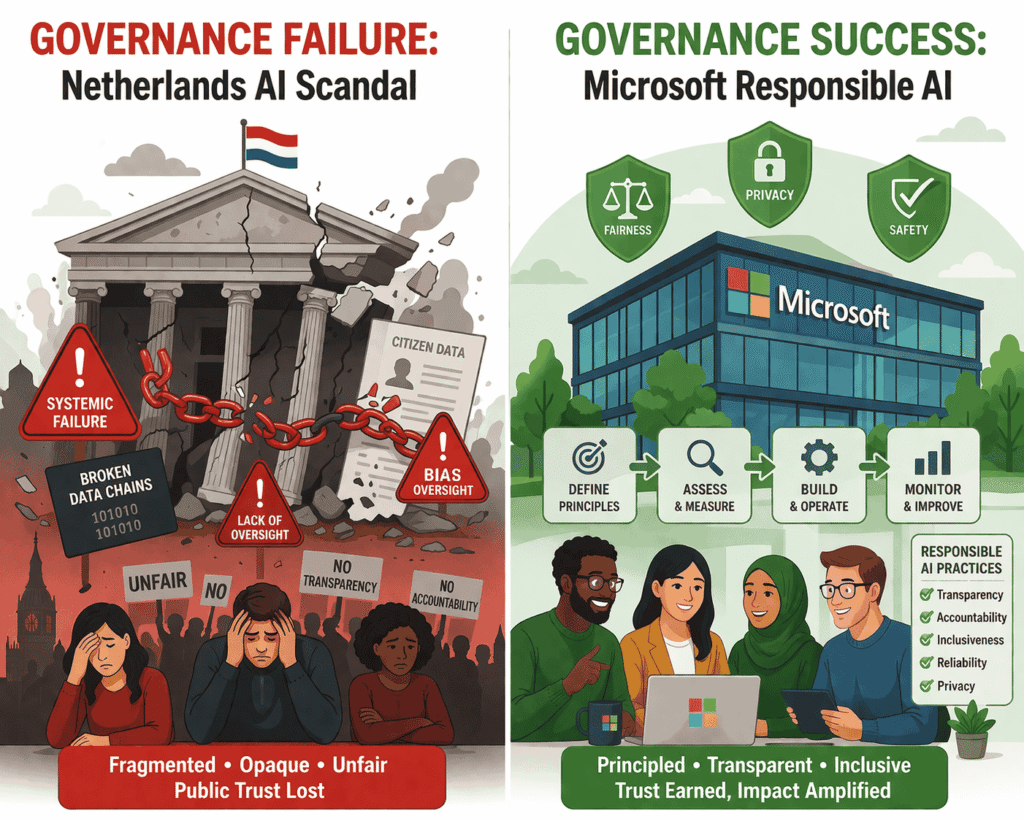

Example 1: The Netherlands Benefits Scandal (Governance Failure)

Between 2013 and 2019, the Dutch tax authority used an AI-assisted fraud detection system to flag families for childcare benefit fraud. The system was later found to have discriminated heavily against families with dual nationalities, many of whom were from minority backgrounds.

More than 26,000 families were wrongly accused. Some had their benefits clawed back entirely, causing severe financial hardship. The scandal brought down the Dutch government in January 2021.

The failure was not technical. The model worked as designed. The failure was in governance: no ethical review of the training data, no transparency for affected citizens, no accountability mechanism to catch systemic errors, and no compliance check against anti-discrimination law.

Example 2: Microsoft’s Responsible AI Framework (Governance Success)

Microsoft has developed one of the most comprehensive public AI governance frameworks in the technology industry. It includes a set of principles (fairness, reliability and safety, privacy and security, inclusiveness, transparency, and accountability) backed by actual organizational infrastructure: a Responsible AI team, internal tools for bias detection, and governance gates that AI products must pass before release.

The result is not perfect, but it is systematic. Microsoft’s approach demonstrates that large-scale AI transformation and strong governance are not in conflict. They are mutually reinforcing.

Example 3: Healthcare AI and the FDA Pathway

In the United States, the Food and Drug Administration has developed a regulatory pathway for AI-based medical devices. Companies must demonstrate not just that their AI works, but that it is safe and effective for its intended use, with growing emphasis on transparency and ongoing monitoring after release.

This governance structure has enabled a wave of genuinely useful AI in healthcare — from tools that detect diabetic retinopathy in retinal scans to systems that flag early signs of sepsis in ICU patients — while providing guardrails that protect patients.

The healthcare sector shows that strong governance does not kill innovation. It channels it productively.

The Decision-Making Gap: Who Should Be in the Room?

One of the most underappreciated governance challenges is deciding who gets a voice in AI-related decisions.

In most organizations, AI decisions are made almost entirely by technical teams. Engineers and data scientists design the system, choose the training data, set the optimization targets, and define what “success” looks like. Business leaders sign off on cost and timeline. Legal reviews the contract. The product ships.

Missing from this picture: ethicists, social scientists, frontline workers who will interact with the system, representatives of the communities that will be affected, and in many cases, legal or compliance experts who understand the regulatory environment deeply.

This is not an argument against technical expertise. Engineers should absolutely be in the room. But AI transformation decisions — especially ones that affect hiring, lending, criminal justice, healthcare, or public services — are fundamentally decisions about values, fairness, and power. They require diverse perspectives.

Governance frameworks that formalize this inclusion tend to catch problems much earlier, when they are far cheaper to fix.

Actionable Steps: Building AI Governance That Works

Whether you are a startup, a large corporation, or a government agency, these steps will help you build governance that enables AI transformation rather than blocking it.

Start with a governance audit. Before deploying any significant AI system, document what you already have: what data you use, who built the model, who reviews its outputs, and what happens when it fails. Most organizations are surprised to discover how many gaps exist.

Assign clear ownership. Every AI system in production should have a named owner — not a team, a person — who is accountable for its performance, fairness, and compliance. Rotate these roles thoughtfully to avoid burnout without losing continuity.

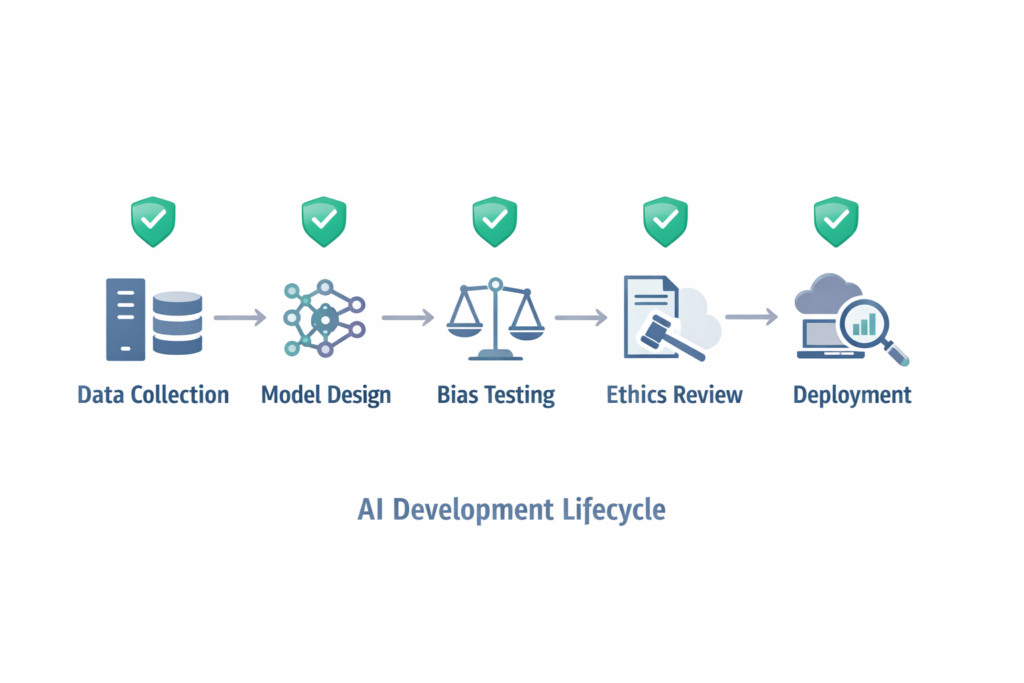

Build ethics review into the development process. Do not wait until the system is built to ask ethical questions. Incorporate ethics checkpoints at the requirements stage, the data selection stage, and the testing stage. Use tools like model cards and datasheets for datasets to document assumptions and limitations.
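
As a rough illustration of how documentation can double as a checkpoint, the Python sketch below models a heavily simplified model card and reports which sections are still empty. The field names are assumptions chosen for this example. Published model card templates cover more ground than this.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ModelCard:
    model_name: str
    intended_use: str = ""
    training_data: str = ""
    known_limitations: List[str] = field(default_factory=list)
    fairness_checks: List[str] = field(default_factory=list)

    def missing_sections(self) -> List[str]:
        """Names of sections left empty, usable as a release checkpoint."""
        sections = ("intended_use", "training_data",
                    "known_limitations", "fairness_checks")
        return [name for name in sections if not getattr(self, name)]


# Hypothetical card with the limitations section left blank.
card = ModelCard(
    model_name="loan-approval-v2",
    intended_use="Rank applications for manual review",
    training_data="Internal applications, 2015 to 2020",
    fairness_checks=["selection rate by group"],
)
missing = card.missing_sections()
ready_for_release = not missing
```

Blocking a release until `missing_sections()` comes back empty is a small mechanism, but it forces the ethical questions to be answered in writing before the system ships.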

Create feedback channels. People affected by AI decisions — employees, customers, citizens — should have a clear and accessible way to flag concerns, request explanations, and appeal decisions. This is both a governance requirement and an early warning system.

Stay ahead of regulation. Assign someone in your organization to monitor the AI regulatory landscape. What is optional today may be mandatory next year. Organizations that build governance proactively are far better positioned than those scrambling to retrofit compliance.

Invest in AI literacy across the organization. Governance breaks down when the people implementing it do not understand what AI systems actually do. Broad-based AI education — for leaders, managers, and frontline staff — is a governance investment, not just a training cost.

The Cultural Dimension: Governance Is Not Just a Policy Document

One of the most important things to understand about AI governance is that policies and frameworks only work if the organization’s culture supports them.

You can write the world’s most comprehensive AI ethics policy. If leadership implicitly signals that speed and cost always trump safety, the policy will not be followed when it counts. If employees fear punishment for raising concerns about an AI system’s behavior, problems will go unreported until they become crises.

Strong AI governance requires a culture where:

- Raising ethical concerns is rewarded, not penalized

- Mistakes are examined openly to extract learning, not buried to avoid embarrassment

- Leaders model the behavior they want to see — asking governance questions in public forums, not just delegating them to compliance teams

This cultural dimension is why AI transformation is a problem of governance at every level: technical, organizational, and human.

Conclusion: The Path Forward

The organizations and governments that will thrive in the age of AI are not those with the most advanced models. They are those that have built the structures to deploy AI responsibly, correct it when it fails, and earn the trust of the people it affects.

AI transformation is a problem of governance. This is not a pessimistic statement. It is an empowering one, because governance is something humans control. The pace of technological change can feel overwhelming and unstoppable, but governance is something we design, implement, and can deliberately improve.

The technology is ready. The question now is whether our institutions, organizations, and leaders are ready to govern it well. The ones who answer yes — and back it up with real structures, real accountability, and real cultural commitment — will define what AI transformation looks like for the rest of us.

Frequently Asked Questions

Q1: What is AI governance, and why does it matter?

AI governance refers to the set of policies, roles, processes, and accountability structures that guide how AI systems are built, deployed, monitored, and corrected. It matters because without governance, even well-intentioned AI systems can cause significant harm — discriminating against vulnerable groups, making unexplainable decisions, or operating outside legal boundaries. Governance is what makes AI transformation sustainable and trustworthy rather than risky and fragile.

Q2: Is AI governance only relevant for large corporations and governments?

No. Any organization using AI in ways that affect people — whether that is a startup using AI for customer screening, a mid-sized company using it for performance reviews, or a nonprofit using it for resource allocation — needs some form of AI governance. The scale and formality of the governance structure will vary, but the fundamental need for accountability, ethics review, transparency, and compliance does not disappear based on organization size.

Q3: How does poor AI governance lead to bias and discrimination?

AI systems learn from historical data. If that data reflects past discrimination — for example, historical hiring decisions that favored men, or loan approvals that systematically excluded minority communities — the AI will learn and replicate those patterns. Without governance mechanisms like bias audits, diverse development teams, and ongoing monitoring, these patterns go unchecked and can affect thousands or millions of people before anyone notices.

Q4: What is the difference between AI ethics and AI governance?

AI ethics refers to the values and principles that should guide AI — fairness, transparency, human dignity, non-maleficence. AI governance is the practical implementation of those values through organizational structures, policies, roles, and processes. Ethics without governance is aspiration. Governance without ethics is bureaucracy. Effective AI transformation requires both working together.

Q5: What regulations currently apply to AI systems?

The regulatory landscape is evolving rapidly. Key frameworks include the EU AI Act (the most comprehensive AI regulation to date, with a risk-based classification system), the EU General Data Protection Regulation (GDPR), which has significant implications for automated decision-making, various US state-level laws (including the Colorado AI Act and California’s AI-related legislation), and sector-specific rules in healthcare, finance, and law enforcement. Organizations should consult qualified legal counsel to understand which regulations apply to their specific AI use cases and geographies.

Abdullah Zulfiqar is Co-founder and Client Success Manager at RankWithLinks, an SEO agency helping businesses grow online. He specializes in client relations and SEO strategy, driving measurable results and maximizing ROI through effective link-building and digital marketing solutions.