Web scraping has evolved from a niche developer skill into a core business intelligence capability. In 2026, organizations of every size — from solo freelancers to Fortune 500 enterprises — rely on web scraping software to collect real-time data, monitor competitors, track prices, generate leads, and power machine learning pipelines. The tools available today are more capable, more intelligent, and more accessible than ever before.

This guide covers the best-rated web scraping software in 2026, evaluated across performance, ease of use, JavaScript handling, anti-detection capabilities, scalability, pricing, and real-world applicability. Whether you are building your first scraper or scaling an enterprise-grade data operation, the comparisons below should help you narrow the field to the tools that fit your situation.

Why Web Scraping Software Matters More Than Ever in 2026

The open web contains more structured and semi-structured data than at any point in history. Product listings, financial data, job postings, news articles, academic papers, government records, customer reviews, and social media content are all publicly accessible — and all of it holds potential business value.

Manual data collection simply cannot keep up. A human researcher might gather a few hundred records in a day. A well-configured web scraper can collect millions of records in the same timeframe, consistently and without fatigue, provided it is monitored for blocked requests and layout changes.

In 2026, several trends have made choosing the right web scraping software even more critical than before.

Websites have become significantly harder to scrape. Advanced bot detection systems, AI-powered CAPTCHAs, browser fingerprinting, behavioral analysis, and TLS fingerprinting are now standard defenses on major platforms. Only the best scraping tools keep pace with these countermeasures.

Artificial intelligence has become deeply integrated into scraping workflows. Modern tools use AI to auto-detect data fields, interpret unstructured content, adapt to website layout changes, and intelligently retry failed requests. The gap between AI-enhanced scrapers and legacy tools is widening fast.

Data compliance requirements have tightened globally. Responsible scraping in 2026 means navigating privacy regulations, terms of service agreements, and ethical data collection standards more carefully than ever.

With all of this in mind, here is a comprehensive, fully updated breakdown of the best-rated web scraping software in 2026.

Key Criteria for Evaluating Web Scraping Software in 2026

Before examining individual tools, it is essential to understand what separates good scraping software from great scraping software in the current landscape.

JavaScript and Single-Page Application Support: The vast majority of modern websites render content dynamically through JavaScript frameworks like React, Next.js, Vue, and Angular. Any scraping tool worth using in 2026 must handle these environments natively and reliably.

Anti-Bot Bypass Capabilities: Websites deploy increasingly sophisticated bot detection systems from providers like Cloudflare, Akamai, DataDome, and PerimeterX (now part of HUMAN Security). The best tools integrate residential and mobile proxy rotation, CAPTCHA solving, browser fingerprint spoofing, and human behavior simulation to maintain high success rates.

AI-Assisted Extraction: In 2026, top-tier platforms use large language models and computer vision to automatically identify data fields, adapt to structural changes on target websites, and classify unstructured content into clean, usable data formats.

Scalability and Cloud Infrastructure: The ability to run thousands of parallel scraping jobs across distributed cloud infrastructure, with automatic retry logic, job scheduling, and bandwidth management, separates hobbyist tools from professional-grade platforms.

Integration Ecosystem: The best scraping tools connect directly with data pipelines, business intelligence platforms, CRMs, cloud storage, databases, and automation tools like Zapier, Make, and n8n.

Ease of Use for Non-Technical Users: Visual no-code interfaces, pre-built templates, and guided workflows have become table stakes for platforms targeting business users rather than developers.

Pricing Transparency and Value: With scraping platforms ranging from free open source frameworks to six-figure enterprise contracts, understanding the value delivered at each pricing tier is critical.

The Best-Rated Web Scraping Software in 2026

1. Bright Data

Bright Data remains the undisputed leader in enterprise web data infrastructure in 2026. What began as primarily a proxy network has evolved into a fully integrated web data platform that covers every stage of the data collection pipeline — from raw crawling to structured, delivery-ready datasets.

The Bright Data platform in 2026 includes a Web Scraper IDE with AI-assisted field detection, a dataset marketplace with hundreds of ready-to-purchase datasets updated in near real time, a Scraping Browser that fully manages anti-bot bypassing on behalf of the user, and a proxy network with over 100 million residential, mobile, ISP, and datacenter IP addresses spanning every country on earth.

The Scraping Browser deserves special attention. Rather than requiring users to manage proxy rotation, CAPTCHA solving, and browser fingerprinting manually, the Scraping Browser handles all of this automatically at the infrastructure level. Developers connect their existing browser automation scripts to it as they would to any remote headless browser, and Bright Data’s systems handle the anti-detection complexity in the background. This represents a major leap forward in scraping reliability for complex targets like social media platforms, travel sites, and major retail marketplaces.

Bright Data has also significantly expanded its AI-powered data extraction capabilities. The platform can now parse and structure content from pages that undergo frequent layout changes, automatically adapting extraction logic without requiring manual reconfiguration by the user.

For enterprises that need guaranteed delivery of specific datasets on a schedule, Bright Data’s managed dataset service allows customers to define their data requirements and receive clean, structured outputs without managing any scraping infrastructure themselves.

Pricing is consumption-based and ranges from accessible entry tiers for small teams to fully custom enterprise agreements. It is not the cheapest option, but for organizations where data reliability and scale are non-negotiable, Bright Data justifies every dollar.

Best for: Enterprise data operations, e-commerce intelligence, financial data collection, large-scale scraping on protected websites.

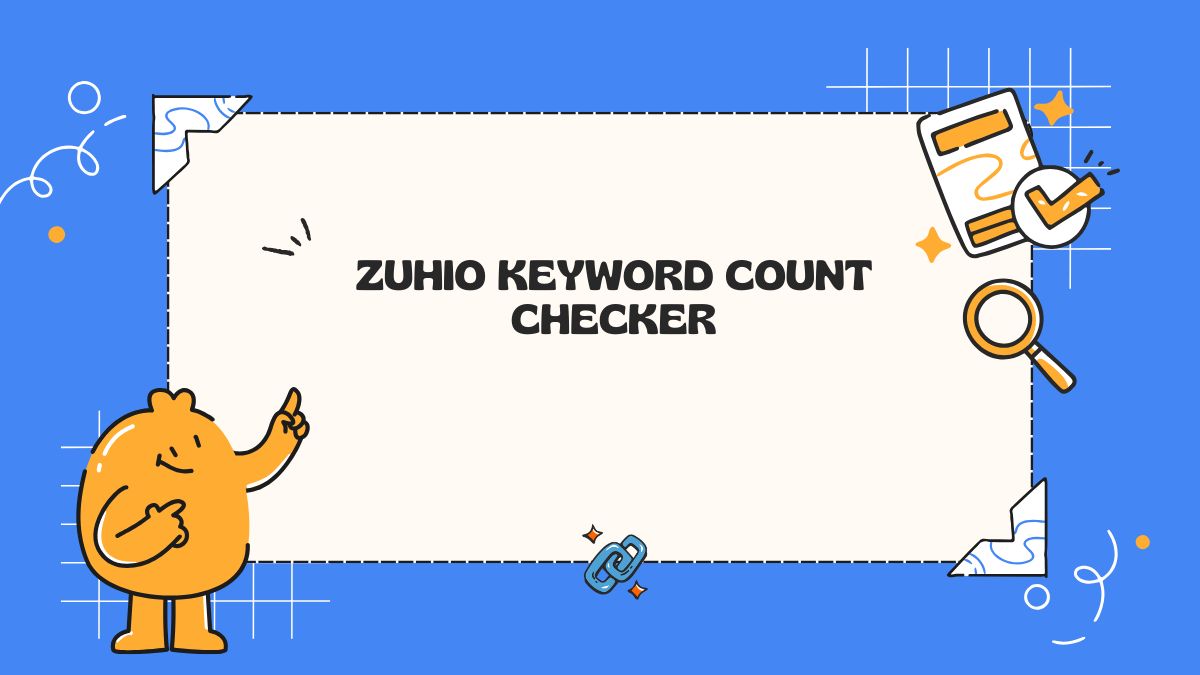

2. Apify

Apify has firmly established itself as the most developer-friendly web scraping and automation platform in 2026. Its combination of cloud infrastructure, an open source scraping framework, a rich Actor marketplace, and deep integration capabilities makes it one of the most versatile tools available for both individual developers and engineering teams.

The Apify platform is built around the concept of Actors — containerized scraping and automation programs that run on Apify’s cloud infrastructure. The Actor Store now contains thousands of community-built and officially maintained scrapers for virtually every major website and data source on the internet. Users can deploy these immediately without writing any code, or they can build and publish their own Actors for internal use or public monetization.

Apify’s open source Crawlee library, which powers much of the platform’s scraping functionality, has continued to evolve and is now one of the most capable web crawling libraries available for both JavaScript and Python developers. Crawlee handles browser automation, HTTP crawling, proxy rotation, request queuing, and error handling with minimal configuration.
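
To make "request queuing" concrete, here is a minimal sketch of the underlying idea: a crawl frontier that resolves relative links and never enqueues the same URL twice. It is a standard-library illustration only and does not use or reproduce Crawlee's actual API. The `RequestQueue` class and its methods are hypothetical names chosen for this example.

```python
from collections import deque
from urllib.parse import urldefrag, urljoin


class RequestQueue:
    """FIFO crawl frontier that never enqueues the same URL twice."""

    def __init__(self):
        self._pending = deque()
        self._seen = set()

    def add(self, url, base=None):
        """Enqueue url (resolved against base if given); return False if already seen."""
        absolute = urljoin(base, url) if base else url
        # Drop the #fragment so /a and /a#top count as the same page.
        absolute, _fragment = urldefrag(absolute)
        if absolute in self._seen:
            return False
        self._seen.add(absolute)
        self._pending.append(absolute)
        return True

    def pop(self):
        """Return the next URL to crawl, or None when the queue is empty."""
        return self._pending.popleft() if self._pending else None

    def __len__(self):
        return len(self._pending)
```

A crawler loop would call `pop()`, fetch the page, and feed every discovered link back through `add()` with the current page as `base`. Production libraries add persistence, priorities, and retry bookkeeping on top of this core.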

The platform’s integration story is particularly strong in 2026. Native connections to Zapier, Make, Slack, Google Sheets, Amazon S3, Snowflake, and dozens of other tools make it straightforward to pipe scraped data directly into business workflows without any custom engineering.

Apify has also made meaningful investments in its AI extraction capabilities. The platform now includes AI-powered auto-extraction modes where users can describe in plain English what data they want to collect, and the system generates and executes the appropriate scraping logic automatically. This dramatically lowers the technical barrier for new users while still preserving the deep customization options that experienced developers depend on.

The free tier remains genuinely useful for small projects, and paid plans scale gracefully from individual developers to large teams.

Best for: Developers and engineering teams, workflow automation, businesses that want cloud-hosted scraping with marketplace flexibility.

3. Octoparse

Octoparse continues to be the most highly rated no-code web scraping tool in 2026, consistently praised for its intuitive interface, reliable performance, and accessible pricing. It is the go-to recommendation for business analysts, researchers, and non-technical professionals who need to extract web data without writing code.

The platform has made significant improvements to its AI-assisted extraction engine. In 2026, Octoparse can automatically detect and map data fields on most standard websites with a single click, dramatically reducing the time required to configure new scraping projects. Users can review and adjust the auto-detected fields through the visual interface, making the process feel closer to editing than building from scratch.

Octoparse supports JavaScript-rendered pages, infinite scroll, pagination, login-required pages, dropdown interactions, and multi-step workflows. Its cloud execution mode allows scraping jobs to run continuously on Octoparse’s servers, with results delivered to Excel, CSV, Google Sheets, databases, or via API.

The template library has expanded considerably and now covers hundreds of popular websites across e-commerce, real estate, job boards, social media, news, and business directories. For users whose target websites are covered by existing templates, getting started takes just a few minutes.

Octoparse has also added scheduled scraping with smart change detection in recent updates, meaning it can automatically alert users or trigger downstream actions when specific data on a monitored page changes. This is particularly useful for price monitoring, inventory tracking, and news surveillance use cases.
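
The general idea behind change detection can be sketched by fingerprinting the monitored content and comparing it with the previous run. This is an illustration of the technique in plain Python, not Octoparse's implementation. The `fingerprint` function and `ChangeDetector` class are hypothetical names.

```python
import hashlib


def fingerprint(text):
    """Hash the monitored text after collapsing whitespace, so trivial reflows are ignored."""
    normalized = " ".join(text.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


class ChangeDetector:
    """Remembers the last fingerprint per key and reports when it changes."""

    def __init__(self):
        self._seen = {}

    def has_changed(self, key, text):
        new = fingerprint(text)
        old = self._seen.get(key)
        self._seen[key] = new
        # The first observation is treated as a baseline, not a change.
        return old is not None and old != new


detector = ChangeDetector()
detector.has_changed("widget-price", "Price: $10")  # baseline, returns False
```

Fingerprinting only the extracted field (a price or a stock status) rather than the whole page keeps unrelated changes, such as rotating ads, from triggering false alerts.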

For users who need more power than Octoparse’s standard no-code interface provides, the platform now includes a hybrid mode that allows developers to inject custom JavaScript at specific points in a scraping workflow, bridging the gap between no-code accessibility and developer-level control.

Best for: Non-technical users, business analysts, small to mid-size data collection projects, template-based scraping.

4. Scrapy

Scrapy remains the definitive open source web scraping framework for Python developers in 2026. After more than a decade of continuous development, it is battle-tested, extremely performant, and supported by one of the most active developer communities in the data engineering space.

What makes Scrapy so enduringly popular is its combination of speed, flexibility, and maturity. Its asynchronous architecture allows it to handle enormous volumes of concurrent requests with minimal resource consumption. The middleware system provides deep control over request handling, response processing, proxy rotation, cookie management, and error recovery. The item pipeline system makes it straightforward to clean, validate, deduplicate, and store extracted data in virtually any format or destination.
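
As a rough sketch of the clean, validate, and deduplicate steps, the class below follows the classic shape of a Scrapy item pipeline: a `process_item(item, spider)` method that returns the item or raises `DropItem`. The item fields (`name`, `price`, `url`) and the class name are assumptions made for illustration. The fallback `DropItem` class exists only so the sketch runs without Scrapy installed.

```python
try:
    from scrapy.exceptions import DropItem  # the real Scrapy exception, if installed
except ImportError:
    class DropItem(Exception):
        """Local stand-in so the sketch runs without Scrapy."""


class CleanAndDedupePipeline:
    """Normalizes a hypothetical product item and drops invalid or repeated ones."""

    def __init__(self):
        self.seen_urls = set()

    def process_item(self, item, spider):
        # Clean: trim the name and strip currency formatting from the price.
        name = (item.get("name") or "").strip()
        price_text = str(item.get("price") or "").replace("$", "").replace(",", "").strip()

        # Validate: require a name and a numeric price.
        if not name:
            raise DropItem("missing name")
        try:
            price = float(price_text)
        except ValueError:
            raise DropItem(f"unparseable price: {item.get('price')!r}")

        # Deduplicate: keep only the first item seen for each URL.
        url = item.get("url")
        if url in self.seen_urls:
            raise DropItem(f"duplicate: {url}")
        self.seen_urls.add(url)

        return {"name": name, "price": price, "url": url}
```

In a Scrapy project, a class like this would be enabled through the `ITEM_PIPELINES` setting, and a later pipeline stage would typically handle storage.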

The Scrapy ecosystem has continued to expand. Integration with Playwright has become standard practice for projects requiring JavaScript rendering, giving Scrapy users access to full browser automation capabilities without abandoning the framework. Scrapy-Splash remains an option for lighter JavaScript handling, though Playwright is now generally preferred for complex dynamic sites.

In 2026, Scrapy has also benefited from the maturation of AI-assisted development tools. Developers using AI coding assistants can scaffold entire Scrapy spiders from natural language descriptions in minutes, significantly accelerating project setup even for complex multi-site crawling operations.

Scrapy is completely free and open source, making it one of the highest-value tools in this entire guide. The primary investment is time — learning the framework properly takes effort, but the payoff in capability and performance is substantial.

Best for: Python developers, large-scale custom crawling, data engineers building production scraping pipelines.

5. Playwright

Microsoft’s Playwright has become the browser automation library of choice for web scraping in 2026, overtaking Puppeteer as the preferred option among professional developers. Its cross-browser support, robust API, and excellent reliability on modern JavaScript-heavy websites make it an essential tool in any serious scraper’s toolkit.

Playwright drives real browser engines (Chromium, Firefox, and WebKit), so target sites see a full browser environment rather than a bare HTTP client. That makes Playwright-driven sessions much harder to distinguish from genuine browsing, although default automation signals such as the navigator.webdriver flag can still give a scraper away without additional hardening. This matters in 2026, when many high-value scraping targets deploy sophisticated browser fingerprinting defenses that reject requests from obviously automated clients.

The library supports all the interactions required for complex scraping scenarios: clicking, scrolling, form filling, file uploading, keyboard input, hover states, shadow DOM traversal, network interception, and multi-tab navigation. It handles authentication flows, single-page applications, and WebSocket-based content reliably.

Playwright is available for JavaScript and TypeScript, Python, Java, and .NET, making it accessible across a wide range of engineering environments. Because it originated as a testing framework, it ships with excellent built-in tooling for debugging, tracing, and diagnosing scraper failures.

In 2026, the Playwright ecosystem has grown to include scraping-oriented extensions and configurations that tune it for data collection use cases, including stealth plugins that reduce browser fingerprint detectability.

Playwright is free and open source. It is most powerful when combined with a scraping framework like Scrapy or an orchestration platform like Apify for managing scale, scheduling, and data output.

Best for: Advanced developers, JavaScript-heavy and interaction-dependent websites, custom browser automation scraping.

6. Diffbot

Diffbot occupies a unique and increasingly valuable position in the web scraping landscape in 2026. While other tools focus on helping users extract data from specific pages they define, Diffbot uses artificial intelligence and computer vision to automatically understand and structure any web page it encounters — without requiring any manual configuration.

Feed Diffbot a URL, and its AI models will determine what type of page it is (article, product listing, company profile, job posting, discussion thread, and so on), extract all relevant fields automatically, and return clean, structured JSON data. No CSS selectors, no XPath expressions, no template building. The system simply reads and interprets the page the way a human would.

This approach scales remarkably well. Diffbot’s Automatic API can process millions of pages across completely different website designs and return consistently structured data, because the extraction logic is driven by content understanding rather than site-specific templates.

Diffbot’s Knowledge Graph, which now contains billions of structured facts about companies, people, products, and locations continuously extracted from across the web, has become a commercially significant data product in its own right. Organizations that need a continuously updated, structured view of the business world — for applications like competitive intelligence, investment research, and CRM enrichment — find Diffbot’s Knowledge Graph uniquely valuable.

The platform is priced at a premium level that reflects its advanced AI capabilities and the significant infrastructure behind it. It is not the right tool for every scraping project, but for organizations with sophisticated, large-scale data intelligence requirements, Diffbot offers capabilities that no other platform on this list can fully match.

Best for: AI-driven automatic data extraction, enterprise intelligence, knowledge graph applications, organizations that need structured data without manual configuration.

7. ParseHub

ParseHub continues to be one of the most reliable and capable no-code scraping tools for intermediate users in 2026. It occupies a compelling middle ground between the simplicity of beginner tools and the power of developer frameworks.

ParseHub’s machine learning-based extraction engine excels at handling complex, multi-level website structures. It can navigate nested data relationships — for example, extracting a list of products, clicking into each product page, extracting detailed specifications, and then following links to related items — all through a visual configuration interface rather than code.

The tool handles JavaScript-rendered content, AJAX-loaded data, login authentication, and paginated datasets effectively. Its conditional logic system allows users to build sophisticated extraction workflows that adapt to different page states without requiring programming knowledge.

ParseHub added more robust scheduling and monitoring features in its most recent updates, making it a more complete solution for ongoing data collection projects that need to run automatically and reliably over time.

The free tier supports a meaningful number of pages per run, which is generous enough for small research projects. Paid plans unlock parallel scraping, cloud execution, and higher data volumes at competitive price points.

Best for: Intermediate users, multi-page scraping projects, users who need more power than basic no-code tools but do not want to write code.

8. Zyte (Formerly Scrapinghub)

Zyte has continued to mature into one of the most respected managed web scraping platforms in 2026, particularly among enterprise clients and engineering teams that want professional scraping infrastructure without the overhead of building it in-house.

Zyte’s flagship product, the Zyte API, provides a single unified endpoint for web data extraction that automatically handles JavaScript rendering, proxy rotation, CAPTCHA solving, and anti-bot countermeasures. Developers send a URL and specify the data they need, and Zyte handles all the complexity of reliably fetching and parsing that page.

The platform’s AI-powered Smart Browser combines the reliability of a managed proxy network with intelligent session management and behavioral simulation, achieving high success rates on even the most protected websites.

Zyte also offers Scrapy Cloud, a hosted deployment platform for Scrapy spiders that provides scheduling, monitoring, job management, and data storage for production crawling operations. For teams already using Scrapy, Scrapy Cloud is a natural extension that eliminates the need to manage crawling infrastructure independently.

Zyte’s data extraction API has been enhanced with AI field detection, meaning developers can now specify what data they want in plain language and the system will attempt to extract it automatically, falling back to manual selector configuration when needed.

Best for: Engineering teams running Scrapy in production, businesses that want managed anti-bot handling, organizations seeking a single API for reliable web data access.

Specialized Web Scraping Tools Worth Knowing in 2026

Beyond the main platforms covered above, several specialized tools deserve recognition for excelling in specific scraping contexts.

Crawlee is the open source foundation underlying much of Apify’s infrastructure. Available as a standalone library for JavaScript and Python developers, it is one of the most capable and actively maintained scraping libraries available. Teams building custom scraping applications from the ground up consistently rate it highly.

Selenium remains relevant in 2026 primarily in environments where legacy compatibility is required. For new projects, Playwright has largely superseded it due to superior performance and reliability, but Selenium’s massive installed base and extensive documentation mean it continues to see significant use.

Beautiful Soup and lxml are Python libraries rather than complete scraping solutions, but they remain indispensable for parsing HTML and XML in custom scraping scripts. Combined with the httpx or requests library for fetching pages, they form the foundation of countless lightweight scraping projects.

ScrapingBee offers a simple, proxy-backed API for rendering JavaScript pages and bypassing bot detection, aimed at developers who want anti-bot handling without the complexity of managing Bright Data or Zyte’s full platforms. It is well-rated for mid-scale projects with straightforward scraping requirements.
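
For the lightweight fetch-then-parse approach described above for Beautiful Soup and lxml, the parsing step looks roughly like the sketch below. To keep it free of third-party installs, it uses the standard library's `html.parser` as a stand-in for those libraries. The `LinkExtractor` class, `extract_links` function, and sample markup are hypothetical.

```python
from html.parser import HTMLParser


class LinkExtractor(HTMLParser):
    """Collects (href, link text) pairs from anchor tags."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        # Only collect text while inside an anchor that has an href.
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append((self._href, "".join(self._text).strip()))
            self._href = None


def extract_links(html):
    parser = LinkExtractor()
    parser.feed(html)
    parser.close()
    return parser.links


sample = (
    '<ul><li><a href="/jobs/1">Data <b>Engineer</b></a></li>'
    '<li><a href="/jobs/2">Analyst</a></li></ul>'
)
links = extract_links(sample)
```

With Beautiful Soup the same extraction is usually a short loop over `soup.find_all("a")`, which is why those libraries are preferred once a project grows beyond a few selectors.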

Web Scraping Software for Specific Use Cases in 2026

Different industries and applications have distinct scraping requirements. Here is how the best tools map to common real-world use cases.

E-commerce Price Monitoring: Bright Data and Octoparse are the top choices. Bright Data for enterprise-scale monitoring across major retail platforms with sophisticated bot defenses, and Octoparse for small to mid-size operations using its e-commerce templates.

Lead Generation and Sales Intelligence: Apify’s Actor marketplace includes highly rated scrapers for LinkedIn, Google Maps, business directories, and company websites. For fully automated lead database enrichment, Diffbot’s Knowledge Graph is a premium but powerful option.

Real Estate Data Collection: Octoparse and ParseHub are well-suited for extracting property listings, pricing history, and neighborhood data from real estate platforms. For large-scale, multi-market monitoring, Bright Data or Zyte are better positioned.

News and Media Monitoring: Diffbot’s Article API excels at extracting clean, structured article content from thousands of news sources automatically. For more targeted monitoring of specific sources, Apify and Scrapy are strong choices.

Financial and Market Data: Scrapy and Playwright combinations are preferred by financial data engineering teams for their performance, flexibility, and control. For managed solutions, Bright Data’s financial data products offer compliance-friendly collection options.

Academic Research: ParseHub, Octoparse, and Scrapy are all popular in academic settings depending on the researcher’s technical background. The free tiers of these tools make them especially attractive for research budgets.

Machine Learning Dataset Creation: Scrapy, Crawlee, and Apify are the primary tools used by ML engineering teams building large-scale web datasets for model training. Their scalability and flexible output options align well with data pipeline requirements.

Anti-Detection and Responsible Scraping in 2026

The cat-and-mouse dynamic between web scrapers and anti-bot systems has escalated significantly by 2026. Understanding both sides of this equation is essential for anyone building a serious scraping operation.

Modern anti-bot systems analyze dozens of signals to distinguish scrapers from real users. These include TLS handshake fingerprints, browser canvas fingerprints, WebGL rendering signatures, mouse movement patterns, scroll behavior, timing between requests, cookie handling, HTTP/2 header ordering, and IP reputation scores. A scraper that passes one or two of these checks but fails others will be identified and blocked.

The most effective approach in 2026 combines residential proxies with genuine browser execution through Playwright or a managed browser service like Bright Data’s Scraping Browser. This combination is extremely difficult to distinguish from real human traffic because it uses real browser instances with authentic fingerprints, running on real residential IP addresses.

On the responsible scraping side, it is more important than ever to understand the legal and ethical landscape. Terms of service violations, privacy regulation breaches, and excessive server load are real risks that can result in legal action, IP bans, or reputational damage.

Best practice in 2026 means always reviewing target website terms of service, honoring robots.txt directives, rate limiting requests to avoid causing server strain, avoiding the collection of personally identifiable information without a lawful basis, and storing scraped data securely with appropriate access controls.
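
Two of these practices, honoring robots.txt and rate limiting, are straightforward to encode. The sketch below uses Python's standard `urllib.robotparser` and a simple minimum-interval gate. The `PoliteGate` class, the `my-bot` user agent, and the sample robots.txt content are assumptions for illustration, and a real crawler would download robots.txt from the target site rather than parse an inline string.

```python
import time
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, inlined so the sketch needs no network access.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 2
"""


def build_robots(robots_txt):
    """Parse robots.txt text into a RobotFileParser."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


class PoliteGate:
    """Blocks disallowed URLs and enforces a minimum delay between requests."""

    def __init__(self, robots, user_agent, min_interval=1.0,
                 clock=time.monotonic, sleep=time.sleep):
        self.robots = robots
        self.user_agent = user_agent
        # Prefer the site's own Crawl-delay when robots.txt declares one.
        self.min_interval = robots.crawl_delay(user_agent) or min_interval
        self.clock = clock
        self.sleep = sleep
        self._last = None

    def wait_for(self, url):
        """Return False if robots.txt disallows url; otherwise pause as needed and return True."""
        if not self.robots.can_fetch(self.user_agent, url):
            return False
        if self._last is not None:
            remaining = self.min_interval - (self.clock() - self._last)
            if remaining > 0:
                self.sleep(remaining)
        self._last = self.clock()
        return True
```

Calling `wait_for(url)` before every request keeps both checks in one place. The `clock` and `sleep` parameters are injectable only so the behavior can be tested without real waiting.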

Pricing Overview: What to Expect in 2026

Web scraping tools in 2026 span an enormous price range. Here is a realistic overview of what different budget levels unlock.

Free and open source tools including Scrapy, Playwright, Crawlee, Beautiful Soup, and the free tiers of Apify and Octoparse are suitable for learning, personal projects, and small-scale data collection. The main cost here is developer time rather than licensing fees.

Mid-range platforms in the range of roughly twenty to three hundred dollars per month, including Apify’s paid plans, Octoparse’s cloud tiers, ParseHub’s paid options, and ScrapingBee, offer cloud execution, higher data volumes, scheduling, and better support. These are well-suited for small businesses, freelancers, and research teams.

Enterprise platforms including Bright Data, Diffbot, and Zyte operate at significantly higher price points, typically starting at several hundred dollars per month and scaling into thousands for high-volume operations. These platforms are justified when data reliability, scale, and support are business-critical requirements.

Frequently Asked Questions About Web Scraping Software in 2026

Is web scraping legal in 2026?

Web scraping publicly available data is generally legal in most jurisdictions, though the legal landscape continues to evolve. Court decisions in major markets have generally supported the legality of scraping publicly accessible information, but violating terms of service, scraping behind authentication walls without permission, or collecting personal data in violation of privacy regulations can create legal liability. Always consult legal counsel for specific situations.

Which web scraping tool is best for beginners in 2026?

Octoparse and ParseHub are the most beginner-friendly options. Both offer visual interfaces, pre-built templates, and free plans that allow new users to start extracting data within minutes without any programming knowledge.

Can web scraping software handle websites protected by Cloudflare?

Yes, but it requires the right tools. Bright Data’s Scraping Browser, Zyte API, and Apify with appropriate proxy configurations are all capable of successfully scraping Cloudflare-protected websites in most scenarios. Basic scrapers without anti-detection capabilities will be blocked.

What is the fastest web scraping framework in 2026?

Scrapy remains one of the fastest options for HTTP-based scraping due to its asynchronous architecture. For browser-based scraping, Playwright with optimized concurrency settings delivers strong performance. For managed speed at scale, Bright Data’s infrastructure handles parallel scraping at rates that are difficult to match with self-hosted solutions.

Do I need proxies for web scraping?

For scraping a single website occasionally, proxies may not be necessary. For any scraping at meaningful scale, or for scraping websites with bot detection, rotating residential or datacenter proxies are essential to avoid IP bans and maintain data collection continuity.
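
Proxy rotation itself is conceptually simple. A minimal sketch, assuming a small pool of placeholder proxy addresses and a hypothetical `ProxyRotator` class, cycles through the pool in round-robin order and skips any proxy that has been marked as banned:

```python
import itertools


class ProxyRotator:
    """Round-robin over a proxy pool, skipping proxies marked as banned."""

    def __init__(self, proxies):
        self._pool = list(proxies)
        self._cycle = itertools.cycle(self._pool)
        self._banned = set()

    def mark_banned(self, proxy):
        self._banned.add(proxy)

    def next_proxy(self):
        # Look at each proxy at most once per call before giving up.
        for _ in range(len(self._pool)):
            proxy = next(self._cycle)
            if proxy not in self._banned:
                return proxy
        raise RuntimeError("no usable proxies left")


# Placeholder addresses for illustration only.
rotator = ProxyRotator([
    "http://proxy-1.example:8000",
    "http://proxy-2.example:8000",
    "http://proxy-3.example:8000",
])
```

With the requests library, the chosen address would typically be passed as `proxies={"http": proxy, "https": proxy}`. Commercial proxy services usually perform this rotation on their side behind a single endpoint.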

Final Verdict: The Best Web Scraping Software in 2026

The right web scraping software for you in 2026 depends on your technical background, the complexity of your target websites, the scale of your data needs, and your budget.

For non-technical users and business analysts, Octoparse is the best-rated no-code option with the most complete feature set at an accessible price. For intermediate users who need more power without code, ParseHub delivers exceptional value.

For developers building custom scraping solutions, Scrapy combined with Playwright is the most powerful open source combination available. For developers who want cloud infrastructure without giving up code control, Apify is the strongest platform in its class.

For enterprises that need guaranteed data delivery at scale from the most protected websites on the internet, Bright Data is the clear market leader. For organizations that need AI-driven, automatically structured data without manual configuration, Diffbot is unmatched.

And for engineering teams that want a fully managed scraping API with excellent anti-bot handling and production reliability, Zyte stands as one of the most professional options in the market.

Web data is one of the most valuable assets a business can possess in 2026. The tools in this guide exist to help you collect it efficiently, reliably, and at whatever scale your ambitions demand. Choose the right one for your needs, invest in understanding it properly, and the data advantage you gain will compound over time.

Usman Hakim is an SEO specialist at RankWithLinks, focusing on link building and organic growth. He helps brands improve search rankings through white-hat strategies, including guest posting and authority backlinks.